In the ongoing D&D game I run for my friends every other Friday night, we’re taking a historical tour of the development and changes the game has seen throughout its forty-year history. The Friday Night Group (as I affectionately, although less than creatively, refer to them) started in 1st edition Advanced Dungeons & Dragons, taking on The Temple of Elemental Evil. So far, they’ve survived the moathouse ruins (the Village of Hommlet portion of the adventure) and converted to 2nd edition AD&D for their expedition to Nulb. Now, as the time nears for them to enter the temple itself, we’re converting to 3rd edition Dungeons & Dragons: leaving behind the ’80s and ’90s, entering the ’00s, and beginning our exploration of the d20 System. Why? Why not? My Friday Night Group game is a history lesson in D&D-ology. I had the good fortune to play the Basic/Expert version, the BECMI version, 1st edition AD&D, and 2nd edition AD&D… and the folks who play in the Friday Night Group had not.

I didn’t take my players all the way back to Basic/Expert or BECMI. We started with 1st edition AD&D and went forward from there, partly because, I guess, I believe the AD&D systems (1st and 2nd editions) were the definitive versions of the game during their respective time periods… and partly because they were the incarnations of the game I played most, and thus the ones I know best. It was interesting to revisit those editions: I was immediately reminded of the things I loved and the things I didn’t love about both of them. Most of what I didn’t like had to do with the sheer volume of cumbersome rules. I always got around the “great wall of rules” by focusing my games more on role playing and less on the average spread of percentile dice rolls needed to determine which direction the spray from a half-elf’s sneeze will go under a particular set of conditions. I’m just not in it for that sort of thing. I’m there for the creative part… the storytelling part… the social part. People who insist on more realism in their fantasy role playing games kind of baffle me. It’s a fantasy role playing game… you know, “fantasy”… it’s not supposed to be realistic. I look at it this way: rules should never get in the way of the enjoyment of the game… ever. That’s why I have little tolerance for “rules lawyers” and “griefers”: spoiling the enjoyment of the game is pure, unbridled bullshit. It also means that 3/3.5 edition and I might not get along too well.

I’ve studied 3/3.5 D&D, but I’ve never actually played it, much less DMed it. From a mechanics standpoint I find this edition very appealing, but the more I look at the combat system, the more my brain starts to shriek. 1E AD&D was a booger for rules… thorough, but unnecessarily complex. 2E AD&D tried to slim down the core rules, and was mostly successful at first… but then it got bogged down by too many options. From what I’ve seen and learned about 3/3.5E so far, 3E went back to the 1E formula and became a woolly booger for rules, and then 3.5E tried to disentangle it… with more or less success. Pathfinder might have licked that tangle… it could be considered 3.75E. 4E? Totally different monster… D&D with the role playing aspect reinterpreted as a strategic model, as opposed to the kind of role playing that made D&D famous… (Infamous? That means “more than famous,” right? Whatever…)

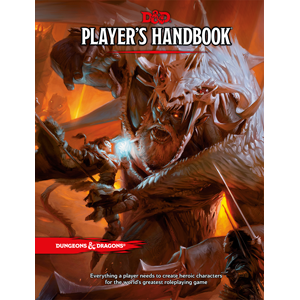

All this is a preamble… I’m sorry to say it took me a little over 600 words to get to the point (which is not uncommon for me): I received my D&D 5E Player’s Handbook on Tuesday, and I’m pleased. Is it perfect? No, of course not. Nothing touched by human hands can ever be perfect, and perfection is an illusion anyway. Okay, maybe a little too philosophical… sorry. I digress… yet again… I already know the 5E rules. I had a hand (a very small hand… maybe not even pixie-sized) in the development of those rules, and they’re available for free as a PDF on the Dungeons & Dragons website. Anyone can download the rules and play the game… the “network television” version of D&D 5E. The Player’s Handbook is the “basic cable” version, and the forthcoming Monster Manual and Dungeon Master’s Guide are the “full cable package with ‘on demand.’” I like this extensible version of D&D. I like the fast and easy rules, I like the emphasis on role playing, and I like that Wizards of the Coast’s D&D R&D team put the “table” back in tabletop RPGing… they brought “table” back… they helped D&D get its groove back… Nope, no good… it just doesn’t have the right ring to it… but you know what I mean, I hope.

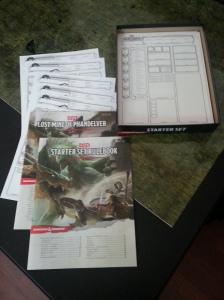

Dungeons & Dragons 5E is a game to play with your homies. Seriously. Gather four or five friends, break open a Starter Set (c’mon, it’s only $20… you can’t even get a proper buzz on $20 anymore), and get a game going. D&D is a social thing… that’s the magical quality the original had, that the revised red Basic book and the revised blue Expert book had… that the boxed BECMI sets had… that the Rules Cyclopedia had. It was the magic that AD&D, in both of its overly complex manifestations, had. It’s why this damn game matters to so many people, and why they’re so goddamned passionate about it even if they haven’t played in years. Uncles Gary and Dave left us with this ridiculously awesome legacy: they gave us the ability to make stories together and create our own legends, and they gave us an excuse to forge friendships founded on shared imagination and trust.

With all that in mind, here, now, is my review of the D&D 5E Player’s Handbook…

Do you need the Player’s Handbook to play the game? No, you don’t… not to play the essential D&D 5E. You can do that with an adventure book, like Hoard of the Dragon Queen, and the Basic Rules downloaded from the D&D website. You can play with a $20 Starter Set and nothing else. So why invest $50 in a Player’s Handbook? I don’t know. I don’t work for WotC, so I’m not interested in selling the book to anyone, except that I have the ulterior motive of wanting the game to stay around for a long time. I can tell you why I bought it: it extends the game, offering more options that give players a great variety of choices through which to express their creativity. The Basic Rules give you the “iconic” races (human, elf, dwarf, and halfling), four core classes (cleric, fighter, rogue, and wizard), and six backgrounds (acolyte, noble, folk hero, criminal, sage, and soldier). That makes for a good set of fantasy character combinations. The Player’s Handbook gives you the “iconic” races and subraces from the Basic Rules, plus drow elves, dragonborn, tieflings, gnomes, half-orcs, and half-elves. It gives you the core classes plus the barbarian, bard, druid, monk, paladin, ranger, sorcerer, and warlock. There’s a plethora (heh, “plethora”) of backgrounds, and optional rules for things like multiclassing and feats.

Speaking of feats, one of the greatest feats the 5E Player’s Handbook pulls off is that its large menu of options never feels overwhelming. When I look at the splatbooks for 3/3.5E or the ones for 2E, they sometimes make my head swim. It’s not that the information in any of those products is bad; it’s just not well organized. The 5E Player’s Handbook is very well organized, with high production values and a lot of thought put into how the game information is presented. The book is divided into three parts: it first guides you through making a character, then presents the information you need to play the game, and finally gives you the rules for magic and an extensive selection of spells. Things are easy to find, and after my initial flip-through to gawk at the artwork, I could easily recall where certain pieces of information were located and go back to them.

The book is beautiful to look at. From an aesthetic standpoint, the art and illustrations here are likely the finest ever used in a D&D product. Tyler Jacobson (one of my absolute favorite fantasy artists) nails the cover with an homage to Against the Giants; King Snurre never looked so badass. The book is also a tactile delight: the glossy cover with its textured, matte half-back cover and the thick, durable interior pages make you not want to put it down. The binding is solid, and I expect (especially for fifty fucking dollars) that my Player’s Handbook will stand up to some use.

Yes, the price point is a bit of a nitpick for me: $50 is a little steep. My teen players balked when I told them how much the book cost, but of course they don’t need it because the Basic Rules are free (did I mention that already?), and that’s what keeps me from declaring total bullshit over the cover price. Is the Player’s Handbook worth $50? All things considered, yes… yes, it is. This is a high-quality product that turns a good game (the Basic Rules) into a great game… but I think Hasbro and WotC could have been a little more merciful with the price… just a little. I have to point out, though, that the Pathfinder Core Rulebook is also $50, and it includes the GM portion of the game. With D&D 5E, I still have to shell out another $50 for the Dungeon Master’s Guide when it comes out in November. Did I say, “I have to…?” That’s not entirely true. There’s a Basic Rules for DMs available for free as a PDF on the Dungeons & Dragons website, so buying the Dungeon Master’s Guide is not absolutely required… but the potential for another $50 expense can be perceived as an edge for Pathfinder, which is D&D’s biggest competitor. Personally, I’ll spend the money… I’m Team D&D. I like Pathfinder, but I owe that system no loyalty; it didn’t play a part in the formative years of my life.

I’m not going to devote much space to the game rules, because you can see those for yourself without spending a dime: go forth and download the Basic Rules, learn the game, the end. I will say that this is one of the most accessible incarnations of D&D ever. Combat is covered in ten pages. No shit, in-ten-pages. The game rules are streamlined and boiled down to just the essentials, but they never feel incomplete. D&D R&D simply added a brilliant little game mechanic called Advantage/Disadvantage, which replaces the copious situational modifiers found in earlier editions. No more +2 for being on higher ground, -2 for low-light conditions, +4 for this, -1 for that… If your character is in an advantageous position or under favorable conditions, roll two d20s and take the higher of the two rolls; that’s Advantage. Under unfavorable conditions, roll two d20s and take the lower of the two rolls; that’s Disadvantage. It speeds up the game and still gives players an edge when they have it, and a penalty when they don’t.
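For the number-crunchers in the audience, here’s a little Python sketch of how Advantage and Disadvantage shake out on the dice. Fair warning: this is my own illustration, not anything printed in the Player’s Handbook, and the function names (`roll_with`, `exact_average`, `chance_at_least`) and the "normal"/"advantage"/"disadvantage" mode labels are made up for the example. It rolls the dice the way the rules describe (two d20s, keep the higher or the lower), and it also walks through all 400 possible pairs of rolls to get exact averages and odds instead of guessing.

```python
import random
from fractions import Fraction
from itertools import product

FACES = range(1, 21)  # the faces of a twenty-sided die


def _picker(mode: str):
    """Return the function that chooses which of two d20s to keep (hypothetical helper)."""
    if mode == "advantage":
        return max  # keep the higher of the two rolls
    if mode == "disadvantage":
        return min  # keep the lower of the two rolls
    raise ValueError(f"unknown mode: {mode!r}")


def roll_with(mode: str, rng: random.Random) -> int:
    """Roll a d20 check: one die for 'normal', otherwise two dice and keep one."""
    if mode == "normal":
        return rng.randint(1, 20)
    pick = _picker(mode)
    return pick(rng.randint(1, 20), rng.randint(1, 20))


def exact_average(mode: str) -> Fraction:
    """Exact average kept result, found by enumerating every possible roll."""
    if mode == "normal":
        return Fraction(sum(FACES), len(FACES))
    pick = _picker(mode)
    total = sum(pick(a, b) for a, b in product(FACES, FACES))
    return Fraction(total, len(FACES) ** 2)


def chance_at_least(target: int, mode: str) -> Fraction:
    """Exact probability that the kept d20 shows `target` or higher."""
    if mode == "normal":
        hits = sum(1 for face in FACES if face >= target)
        return Fraction(hits, len(FACES))
    pick = _picker(mode)
    hits = sum(1 for a, b in product(FACES, FACES) if pick(a, b) >= target)
    return Fraction(hits, len(FACES) ** 2)


if __name__ == "__main__":
    rng = random.Random(2014)  # seeded so the sample roll is repeatable
    print("sample roll with advantage:", roll_with("advantage", rng))
    for mode in ("normal", "advantage", "disadvantage"):
        avg = float(exact_average(mode))
        odds = float(chance_at_least(11, mode))
        print(f"{mode:>12}: average {avg:.3f}, chance of 11+ {odds:.0%}")
```

Run it and the exact numbers fall out: a straight d20 averages 10.5, Advantage averages 13.825, and Disadvantage averages 7.175. If you need an 11 or better, Advantage bumps your odds from 50% to 75%, and Disadvantage drops them to 25%. That’s the edge and the penalty, with not a single +2 or -4 to keep track of.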

Have I convinced you? No? No big… that’s not my job. Within the context of this post, my job was simply to inform… and maybe entertain a little. I love this game… Ah, this fuckin’ game… If you’re inclined, I say, “Jump off the fence and give it a swing.” Don’t spend the $50 if you don’t want to: download the Basic Rules, learn the game first, and see if you like it. Find a game on Meetup, or go to your local hobby/game/comic book store and find a game there. Better still: get a group of buddies together, chip in $5 to $6 each, go in together on a Starter Set, and give D&D a spin that way. Trust me, if you like the game enough, spending $50 on the Player’s Handbook will be a lot easier. If D&D is not your cup of tea, then you’re not out $50. My heart is a little colder because of it… but that’s life. This game isn’t for everyone, but for those with whom it connects, that connection typically lasts a lifetime.

Cheers!
